Terraform has become a popular IaC tool for defining and provisioning complete infrastructure, and its adoption has surged since its first release in 2014.

In case you haven’t figured out which IaC tool to use, check out our previous blog on how to choose between CloudFormation and Terraform.

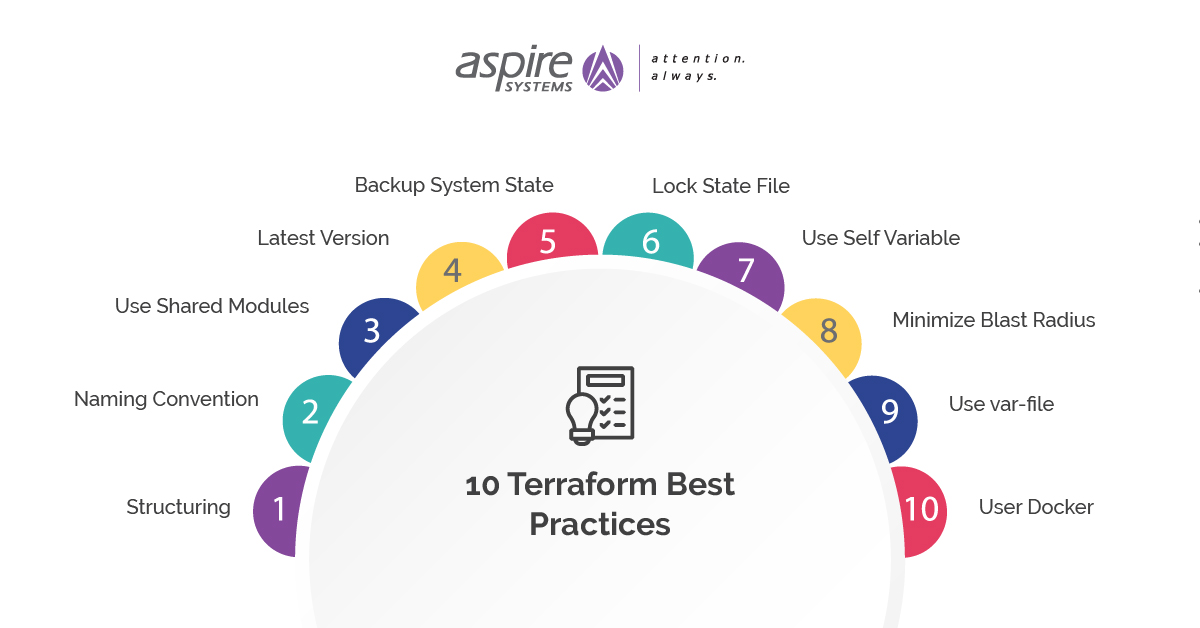

If your organization has started leveraging Terraform, ensure you follow these best practices for better infrastructure provisioning.

1. Structuring

Whenever you use Terraform on a large production infrastructure project, follow a proper directory structure to manage the project’s complexity. The best practice is to assign individual directories to different purposes.

For instance, if you are using Terraform for development, staging, and production, keep a separate directory for each environment.

The configuration should also be separate for each environment, because the configuration of a growing infrastructure becomes complex.

Within a directory, split the code across files as well. You can write all your Terraform code inside the main.tf file, but keeping variables and outputs in their own files makes it easier to read.
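As a rough sketch, one possible layout and file split could look like the following. The directory names, the web_server module, and the ami_id and instance_type variables are illustrative assumptions; Terraform does not require any of them.

```hcl
# Hypothetical layout: one directory per environment, shared modules alongside.
#
# environments/
#   dev/     main.tf  variables.tf  outputs.tf
#   stage/   main.tf  variables.tf  outputs.tf
#   prod/    main.tf  variables.tf  outputs.tf
# modules/
#   web_server/

# environments/dev/variables.tf
variable "ami_id" {
  description = "AMI to launch (supplied per environment)"
  type        = string
}

variable "instance_type" {
  description = "EC2 instance type for this environment"
  type        = string
  default     = "t3.micro"
}

# environments/dev/main.tf
resource "aws_instance" "web" {
  ami           = var.ami_id
  instance_type = var.instance_type
}

# environments/dev/outputs.tf
output "web_instance_id" {
  description = "ID of the web server instance"
  value       = aws_instance.web.id
}
```

Terraform loads every .tf file in a directory together, so the split is purely for readability.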

2. Naming Convention

Naming conventions make the code easier to understand. For instance, let’s say you need three workspaces for development, staging, and production. Instead of naming them env1, env2, and env3, name them dev, stage, and prod.

Apply similar conventions to resources, variables, modules, and so on.
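One way to keep names consistent is to derive them from a single environment variable. The snippet below is a minimal sketch; the myapp prefix and the app_logs bucket are made-up names for illustration.

```hcl
variable "environment" {
  description = "Deployment environment: dev, stage, or prod"
  type        = string

  # Reject anything outside the agreed naming scheme.
  validation {
    condition     = contains(["dev", "stage", "prod"], var.environment)
    error_message = "The environment must be one of: dev, stage, prod."
  }
}

locals {
  # Hypothetical prefix shared by every resource name in this configuration.
  name_prefix = "myapp-${var.environment}"
}

resource "aws_s3_bucket" "app_logs" {
  # Resolves to, for example, "myapp-dev-app-logs".
  bucket = "${local.name_prefix}-app-logs"

  tags = {
    Environment = var.environment
  }
}
```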

3. Use Shared Modules

We strongly advise you to use official Terraform modules. The Terraform Registry has a plethora of modules readily available, so you can avoid reinventing a module that already exists.

Also, each module should focus only on one aspect of the infrastructure, such as creating an AWS EC2 instance.
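For example, a registry module can be pulled in with a short module block. The sketch below assumes the community-maintained terraform-aws-modules/ec2-instance/aws module; the version constraint and input values are placeholders, so check the module’s registry page for the inputs your chosen version supports.

```hcl
variable "ami_id" {
  description = "AMI to launch"
  type        = string
}

variable "subnet_id" {
  description = "Subnet for the instance"
  type        = string
}

# One module, one concern: a single EC2 instance.
module "web_server" {
  source  = "terraform-aws-modules/ec2-instance/aws"
  version = "~> 5.0" # placeholder constraint; pin to a version you have tested

  name          = "dev-web"
  ami           = var.ami_id
  instance_type = "t3.micro"
  subnet_id     = var.subnet_id

  tags = {
    Environment = "dev"
  }
}
```

Pinning the module version keeps a new module release from changing your plan unexpectedly.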

4. Latest Version

New functionality keeps arriving from the Terraform development community, and we recommend staying up to date with the latest version of Terraform as it is released.

If you skip a major release or two, upgrading will be quite a challenge.
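Declaring the versions you expect makes upgrades a deliberate step rather than a surprise. A minimal sketch follows; the version numbers are placeholders, not recommendations.

```hcl
terraform {
  # Fail early if the CLI is older or newer than the range the team has tested.
  required_version = ">= 1.5.0, < 2.0.0"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0" # allow 5.x releases, block the next major version
    }
  }
}
```

Raising these constraints one release at a time keeps each upgrade small.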

5. Backup System State

Always ensure you back up Terraform’s state files.

By default, these files are stored locally inside the working directory, and they keep track of all the metadata and resources in the infrastructure.

Without these files, Terraform cannot tell which resources it manages in the infrastructure.
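A common way to get backups is to keep the state in a versioned remote store instead of on a laptop. The sketch below assumes AWS S3; the bucket name, key, and region are placeholders. The bucket is usually created in a separate bootstrap configuration, not in the configuration that uses it as a backend.

```hcl
# Bootstrap configuration: a bucket that keeps every version of the state file.
resource "aws_s3_bucket" "terraform_state" {
  bucket = "example-org-terraform-state" # placeholder; bucket names are global

  lifecycle {
    prevent_destroy = true # guard against deleting the state bucket by accident
  }
}

resource "aws_s3_bucket_versioning" "terraform_state" {
  bucket = aws_s3_bucket.terraform_state.id

  versioning_configuration {
    status = "Enabled"
  }
}

# In each project configuration: store state in that bucket.
terraform {
  backend "s3" {
    bucket  = "example-org-terraform-state"
    key     = "prod/terraform.tfstate"
    region  = "us-east-1"
    encrypt = true
  }
}
```

With versioning enabled, an earlier state file can be restored from the bucket’s version history if the current one is damaged.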

6. Lock State File

When more than one developer runs the same Terraform configuration simultaneously, the state file can be corrupted or data can even be lost. State locking avoids these scenarios by making sure only one operation writes to the state at a given time.

For example, with the S3 backend, a DynamoDB table with a primary key named LockID can be used for state locking and consistency when more than one user tries to access the state file.
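As a sketch of DynamoDB-based locking with the S3 backend: the table and bucket names below are placeholders, while the LockID key name is what the backend expects. Newer Terraform releases also offer a lock mechanism built into the S3 backend, so check the backend documentation for the version you run.

```hcl
# Bootstrap configuration: the lock table.
resource "aws_dynamodb_table" "terraform_locks" {
  name         = "terraform-locks" # placeholder name
  billing_mode = "PAY_PER_REQUEST"
  hash_key     = "LockID" # the S3 backend looks for this exact key name

  attribute {
    name = "LockID"
    type = "S"
  }
}

# Project configuration: point the backend at the lock table.
terraform {
  backend "s3" {
    bucket         = "example-org-terraform-state"
    key            = "prod/terraform.tfstate"
    region         = "us-east-1"
    dynamodb_table = "terraform-locks"
    encrypt        = true
  }
}
```

If a second terraform apply starts while the first still holds the lock, it stops with a lock error instead of writing to the state.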

7. Use Self Variable

The ‘self’ object is used when you need an attribute of a resource whose value is not known before the infrastructure is deployed. It is available inside that resource’s provisioner and connection blocks.

Suppose you want to use the IP address of an instance that is created only when the terraform apply command runs, so the IP address is unknown until the instance is up and running.

In such cases, ‘self’ comes in handy to get the IP address of the instance, for example self.public_ip.
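A minimal sketch of this pattern, assuming an AWS instance and a local-exec provisioner. The ami_id variable and the output file name are made up for illustration.

```hcl
variable "ami_id" {
  description = "AMI to launch"
  type        = string
}

resource "aws_instance" "web" {
  ami           = var.ami_id
  instance_type = "t3.micro"

  # Runs on the machine executing Terraform, after the instance is created.
  # self refers to this aws_instance, so self.public_ip is known by this point.
  provisioner "local-exec" {
    command = "echo ${self.public_ip} >> instance_ips.txt"
  }
}
```

Outside provisioner and connection blocks, refer to the attribute by its full address instead, such as aws_instance.web.public_ip.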

8. Minimize Blast Radius

The blast radius is the degree of damage caused when things don’t go as planned.

For example, if you apply a Terraform configuration to the infrastructure and the configuration is not correct, how much damage will it do?

To minimize the blast radius, we recommend pushing only a few changes to the infrastructure at a time. Even if something goes wrong, the damage to the infrastructure will be relatively small.

9. Use var-file

If you don’t want certain variable values in your Terraform configuration code, you can put them in a separate variable definitions file (Terraform conventionally uses the .tfvars extension) and pass it to the terraform apply command with the -var-file option.

We recommend passing sensitive values such as passwords and secret keys locally through a var-file rather than saving them inside the Terraform configuration or in a remote location.
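A small sketch of the idea: the configuration only declares the variable, and the value lives in a local file that stays out of version control. The db_password variable and the secrets.tfvars file name are hypothetical.

```hcl
# variables.tf: declare the variable, but give it no default value.
variable "db_password" {
  description = "Database password, supplied at apply time"
  type        = string
  sensitive   = true # hides the value in plan and apply output
}

# secrets.tfvars (a separate local file, listed in .gitignore) would contain:
#
#   db_password = "change-me"
#
# and is passed on the command line:
#
#   terraform apply -var-file="secrets.tfvars"
```

Note that sensitive only masks the value in CLI output; the value can still end up in the state file, which is one more reason to protect and back up state.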

10. Use Docker

Always use Docker containers when running a CI/CD pipeline build job. HashiCorp publishes official Terraform Docker images. If you need to change the CI/CD server, the move is easy because the tooling travels inside the container.

You can also test the infrastructure code inside Docker containers. By integrating Terraform and Docker, you get portable, repeatable infrastructure.

Follow these best practices in your Terraform projects for better results. We hope they help you write better Terraform configurations.

Recommended Blogs:

CloudFormation vs Terraform: A Comparative Study

10 Tips for Developing an AWS Disaster Recovery Plan

AWS EKS vs ECS vs Fargate: Which one is right for you?

Top 5 AWS Monitoring and Optimization Tools
